I’ve spent most of my professional life looking at the world through technical eyes. Systems. Networks. Data flows. Permissions. Logs. Not theory – reality. How things actually work behind the screen. And once you see it that way, you can’t unsee it.

You notice patterns others don’t. You recognize risks long before they make headlines. You understand how small technical decisions quietly shape people’s lives – often without their knowledge or consent. And over time, one uncomfortable truth becomes impossible to ignore: most people will never see these issues coming.

Not because they’re careless. Not because they’re ignorant. But because they shouldn’t have to be technologists to live safely in a digital society. Yet… here we are.

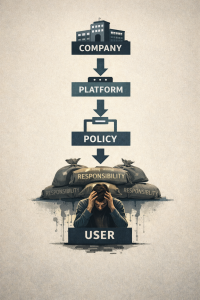

Our lives are now deeply intertwined with systems we don’t control and barely understand. Our data is collected, stored, analyzed, shared, sold (and breached) – often by institutions that insist everything is fine right up until it isn’t. When things go wrong, the responsibility is quietly shifted onto individuals:

Use stronger passwords.

Be more careful.

Read the fine print.

Meanwhile, the systems holding millions – or billions – of lives’ worth of data fail at scale. That disconnect is not accidental, and it’s not sustainable.

To be clear, this is not a blanket condemnation of every company or institution. There are organizations that take privacy and security seriously. There are engineers, compliance officers, and executives with a backbone who genuinely try to do the right thing, even when it’s difficult or expensive, or… inconvenient. They invest in safeguards, question questionable practices, and push back when shortcuts are suggested.

But they are not the whole story.

Too often, privacy becomes negotiable when it collides with quarterly targets. The race for marginal revenue growth, engagement metrics, or shareholder satisfaction routinely overrides common sense and, at minimum, sometimes bends the law. Data that never needed to be collected is retained. Access that should be restricted quietly expands. Transparency is replaced with legal fine print. And risk is treated as an acceptable externality, as long as the consequences fall on users rather than balance sheets.

In theory, we already have systems meant to protect the public: regulators, oversight bodies, compliance frameworks, laws. In practice, those systems sometimes fail – through underfunding, outdated mandates, regulatory capture, or simple inertia. When that happens, the gap isn’t filled by clarity. It’s filled by confusion, silence, or corporate messaging. And that’s a problem.

Because someone has a duty to explain what’s happening, why it matters, and who is actually responsible, in plain language, without jargon, without fearmongering, and without assuming technical knowledge.

That’s why Northern Overwatch exists.

Northern Overwatch was founded to be a clear, steady voice in a noisy digital landscape. A place where complex issues (privacy, cybersecurity, surveillance, accountability) are translated into something the general public can understand and engage with. Not to tell people what to think, but to give them the information they were never properly given.

This isn’t about panic; it’s about awareness. It’s not about outrage, but about accountability. And it’s certainly not about technology for technology’s sake. It’s about people.

We already live in a complicated world. People are busy. They have families, jobs, responsibilities, and enough stress without having to decode technical reports or corporate disclosures just to understand how their data is being used.

Northern Overwatch exists to bridge that gap… to look at issues with technical clarity and ethical grounding, and with the public interest at the center. Because when systems become powerful enough to shape lives invisibly, someone has to watch. And when no one else is speaking plainly, someone has to explain.

That is the role Northern Overwatch intends to play.